Table of Contents

Diffusion model

Signal Detection Theory can be used to get bias-free measures of subject's sensitivity, predict performance on a two alternative forced choice and has been quite useful both in psychophysics and in cognitive neuroscience where it is often used to link physiology to behavior. But, one thing it completely sweeps under the carpet is time. There is no notion of how the decision process evolves over time and so therefore it has nothing to say about reaction times. So, diffusion models.

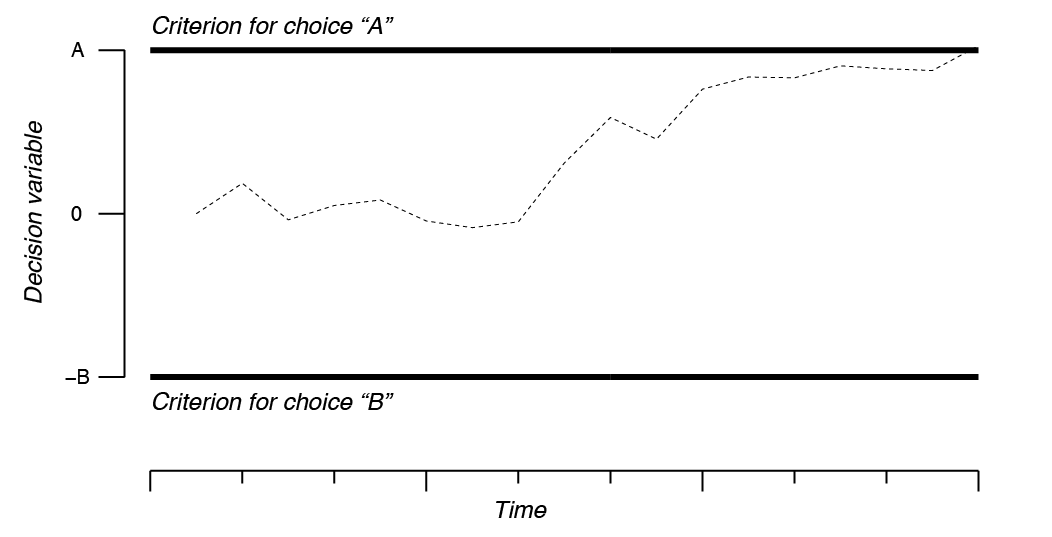

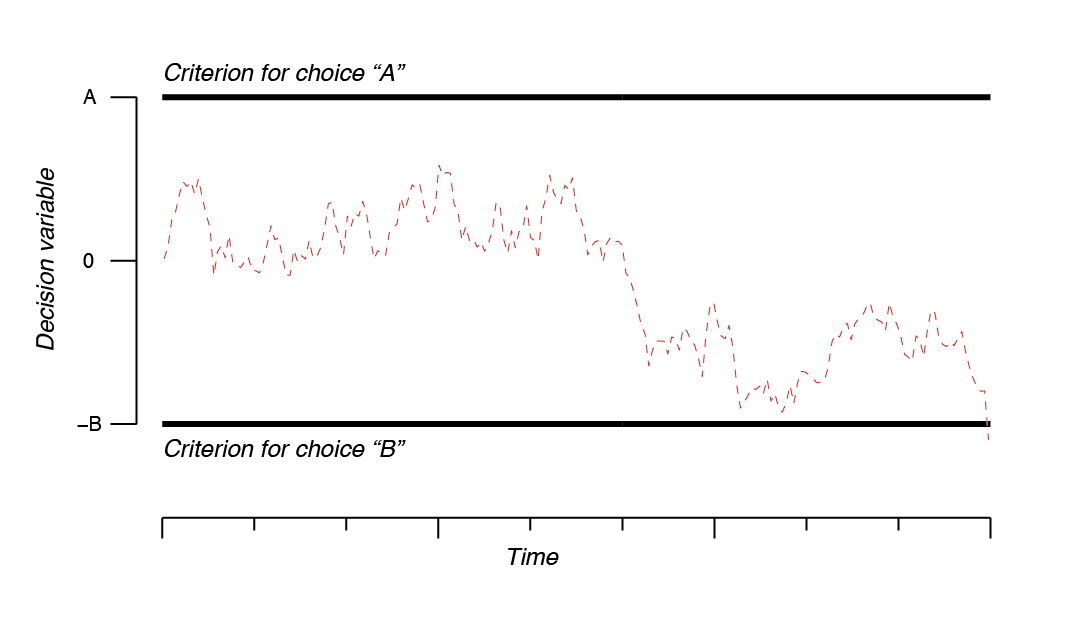

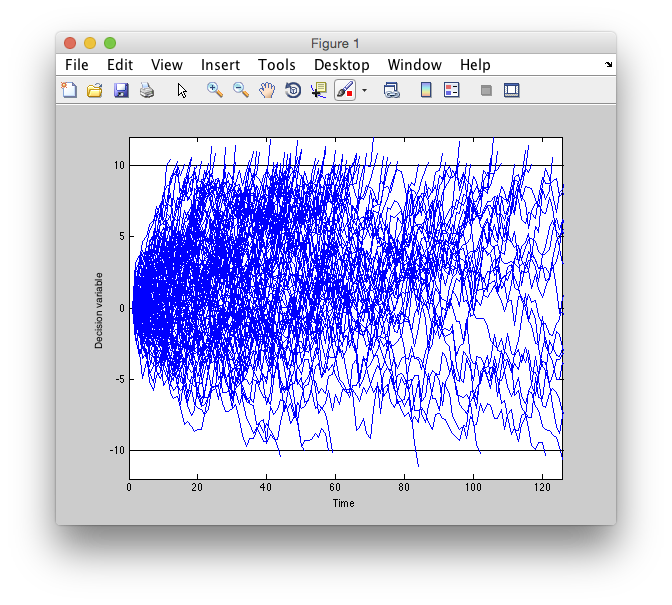

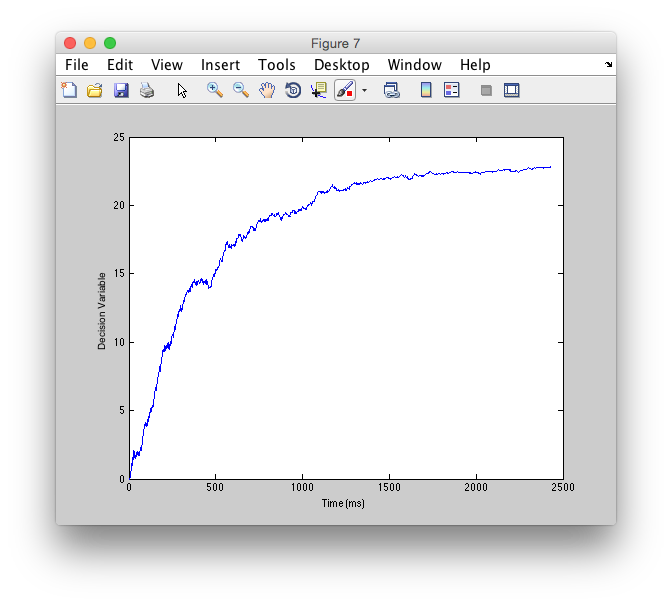

There are many variations of diffusion models, but the basic flavor is to think of a decision variable which accumulates evidence for a choice (generically, let's call that “A”) against another choice (“B”). In the Newsome et al., experiments these would be a choice for, say, leftwards vs rightwards motion. The decision variable gets momentary evidence that is corrupted by noise (can be anything, but typically gaussian) which is integrated across time. This causes the decision variable to move randomly (brownian motion) until it hits (gets absorbed by) one of the decision criterion (bounds). Whichever bound it hits first is the decision that wins. When it hits gives you the reaction time. You should have the following picture in your head of a single trial:

The evolution of the decision variable is literally governed by integrating random draws from a gaussian distribution whose mean governs how much evidence is acquired in each time interval and whose standard deviation is associated with how noise that evidence is. So, if you have strong evidence for choice “A”, then you would have a large positive mean. For example, if a neuron in MT fires a lot in its preferred direction, then it will contribute a large mean and the trial to trial variability will correspond to the standard deviation of the evidence. Sometimes, due to the randomness of each sample from the evidence distribution, the decision variable may take a random walk to the other boundary and a wrong decision will be made. Note, that we are, for the purpose of this tutorial, always simulating a decision process in which the correct decision is “A” (upper bound), since we are adding a positive mean to evidence.

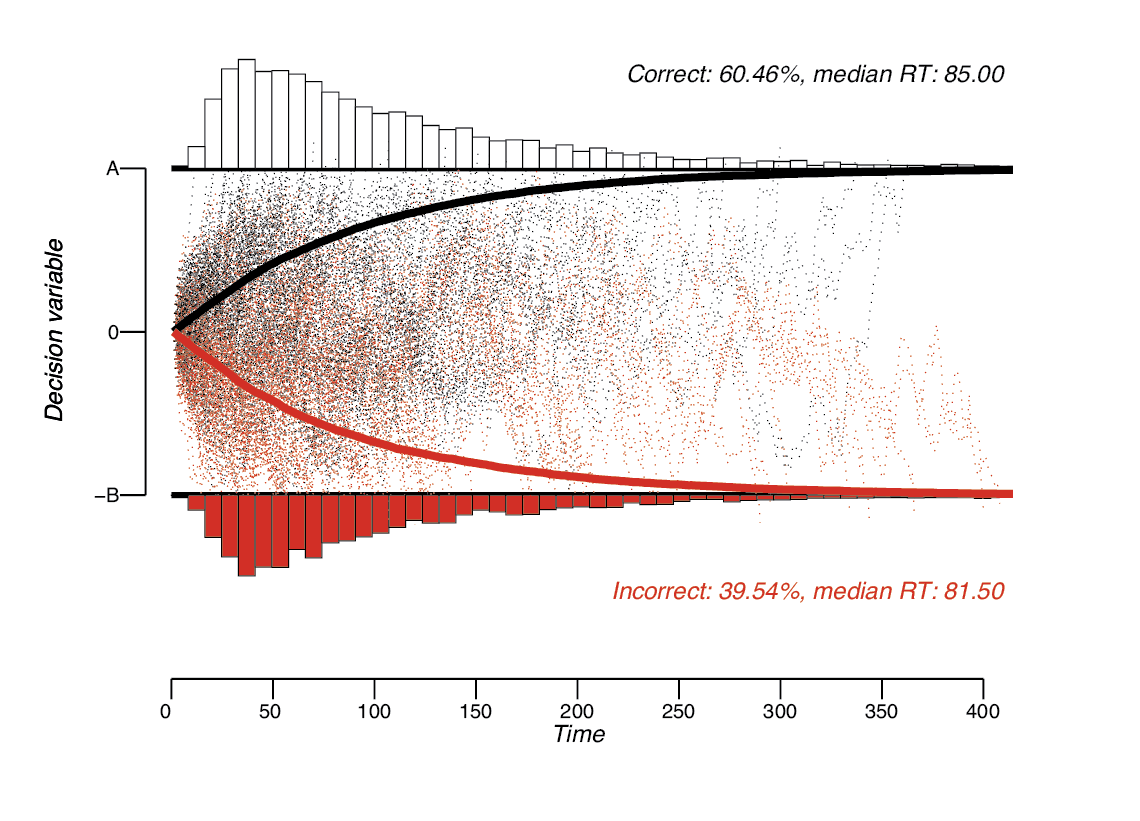

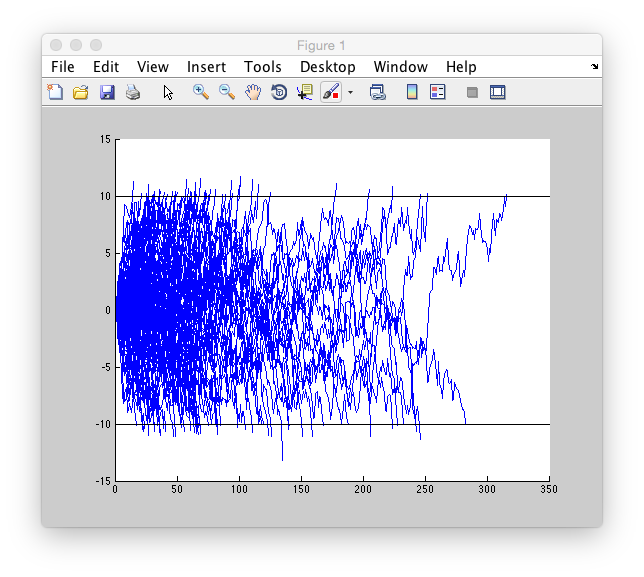

Across many trials then, having a small amount of moment-by-moment evidence (mean of the gaussian variable) relative to variability (standard deviation of the gaussian variable) accumulation will more often go to the correct bound then the incorrect bound. The time it takes to get to each bound (RT) forms a distribution and you can see that the distributions have a bit of a skew to them.

Ok. Get the basic idea? Let's try it out ourselves.

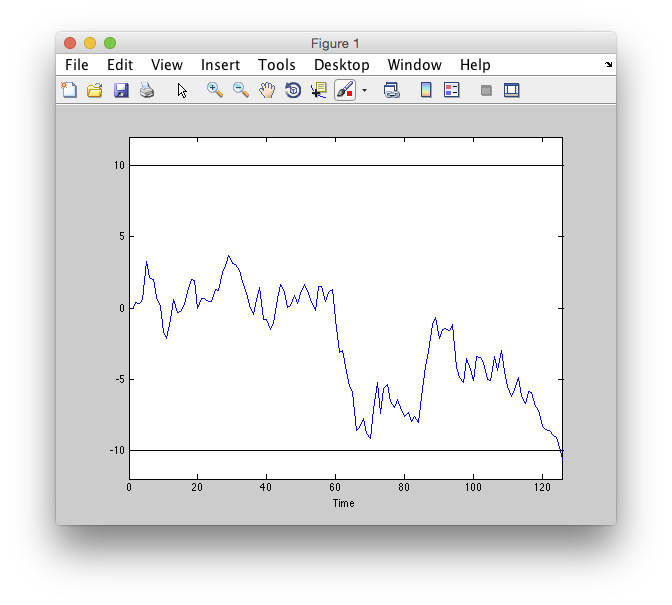

Simulate a trial

So, the idea is to have a decision variable that over time integrates information from a random gaussian process with some mean and standard deviation. Let's start by simulating that (arbitrarily) for a process with mean = 0.1 and standard deviation = 1. Let's have the decision variable, d, start at 0. We will stop the process when it hits either the top bound, A, or the bottom bound -B. For this case, we will just have those bounds equidistant from the starting point. So A=B, and we'll just stop the process when the absolute value of d gets bigger than A. Let's make A for this simulation 10. Got it?

If you know how to program this go ahead and do so. Otherwise, check the hints:

Now, go ahead and plot it.

Simulate multiple trials

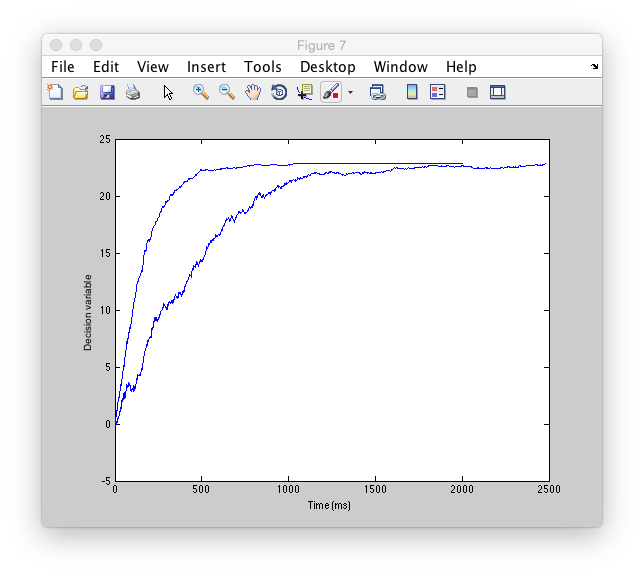

Ok, good so far? Let's try to run that process for multiple trials (say n=100) and plot each trial as we go along. We should also keep track of what decision was made (i.e. which bound was chosen) and when that decision was made. That way at the end we can look at the predicted percent correct and the reaction time distribution - these are the basic predictions of the model. So, go ahead and try to modify the code from above to do that.

Ok. So, let's see what we got. Calculate the percentage of correct trials vs the percentage of incorrect trials (what do you expect these to be like?)

And, let's look at the reaction time distribution (this might be more meaningful for the correct trials in which you have enough trials to be able to see what is going on.

What does the distribution look like? Is it gaussian? Is it skewed? Why does it have the shape that it does?

If you have that all working, then try the same simulation, but don't give any sensory evidence. Decisions are just based on chance fluctuations. You should expect percent correct to equal percent errors. Try it.

Ok, what percent correct and incorrect did you get. What happened to reaction times? Why?

The thing to note about this, is that even thought the gaussian generating process had 0 mean - you still ended up on each trial meandering to one of the bounds. Random walks don't just jitter around the starting place and never go anywhere - by integration of random values they end up, by chance, going somewhere.

Try playing around with the model in different ways - what does changing the height of the bounds do? Or changing the mean? Or, the standard deviation. What about using a different distribution, not gaussian (why might you want to do that?). What about the starting point of the process?

Ok, so you should now have a rough idea of how changing different parameters of the model can affect the accuracy and reaction times. So, er, Pop quiz, what parameters can you change to affect the accuracy and reaction time?

Fit behavioral data

Ok, so that's all great, but how do you fit this beast to behavioral data? Well, one answer is to do just what you have been doing - simulate many variations of the parameters until you get something that matches by some fit criteria (usually maximizes the likelihood of your data). A non-linear search algorithm can adjust the parameters for you to search the space of parameters more efficiently and you just let it go at it and come back some time later to see what it gets. While this may seem inefficient, there are times when you have to do this. In particular, if you make the model more complicated (various versions have been proposed in which you add randomness not just do the moment to moment evidence, but also to the starting point for instance) or if you want to fit the whole shape of your reaction time distributions you may wish to do a fit like this.

But, if you just want to fit your percent correct and mean reaction times. There are closed-form solutions that give you equations for how to do this.

This first equations tells you what to expect for the probability correct, given the bounds (assuming symmetrical bounds) A and the mean of the process μ. Where does this come from? Well it comes from a mathematical derivation which is a bit complicated (see: here for a helpful primer). But, you can think of it as the solution to the following problem. Given bounds A and a gaussian process with some mean and standard deviation, what is the probability that the process will end up at the top bound instead of the bottom bound. The solution is the above equation.

Let's take a look at the form of the equation and see if it makes sense. How does it change as you make μ bigger and bigger? You know what to expect, right? So, punch the equation into matlab and see what it does for different values.

What does it look like? Where does it start and where does it end? Does the shape make sense?

But, wait a second. What happened to the standard deviation? You know from above that it matters. But, it's not in the equation, why? Well, it actually should be. The full equation should read:

Trouble is, changing A and changing sigma amount to the same thing, just in opposite directions. They are both unobserved variables, so you cannot estimate both of them. So, you just estimate one and say that you estimate the ratio of the two. You might say the same thing about μ and A, but take a look first at the solution for when on average you hit the bound:

Ok, what shape does that give you? Well, plot it as a function of μ just like you did for the percent correct equation and see what it looks like.

What do you get for that? Does the shape of it make sense? What happens as mu gets larger and larger?

One thing to note here. This is the average reaction time. Remember that the distributions from above look skewed? This does not tell you anything about the skew of the distribution, just the central tendency. The model actually predicts a particular skewed shape of the reaction time distribution and that may be important. It is not fit, when you use the above equation.

Another thing. What are the units here? We want them to be in milliseconds, for example. If the monkey takes, say, 800ms to make the decision, then this equation needs to be fit such that you get a value of 800. Right? So, change A and see what happens. Can you get a value of 800 out of the equation?

You see that you get some value of mu that will give you 800 ms? You can now imagine playing around with values of mu and A to fit your reaction time data. But, what's the longest reaction time you should expect for some A and mu? Is it actually plotted on the graph?

But, we may want to know what to expect for a reaction time when mu is 0 and there is no sensory evidence (this is a very important case, right? Remember how choice probabilities were calculated in the Newsome experiment?) So, to do that you need to take limits:

Ok. Good, so now we have an expression for the reaction times as a function of all possible μ

But, hey, wait a second. Notice something else here? What value is the shortest reaction time that the model predicts?

But, this isn't really true for behavioral data. There must be some inherent delays associated with the time visual input is transduced and motor delays, at least, right? So, to fix that people put in, a, well, fudge factor - an independent “non decision” time. So, you get another free parameter to fit:

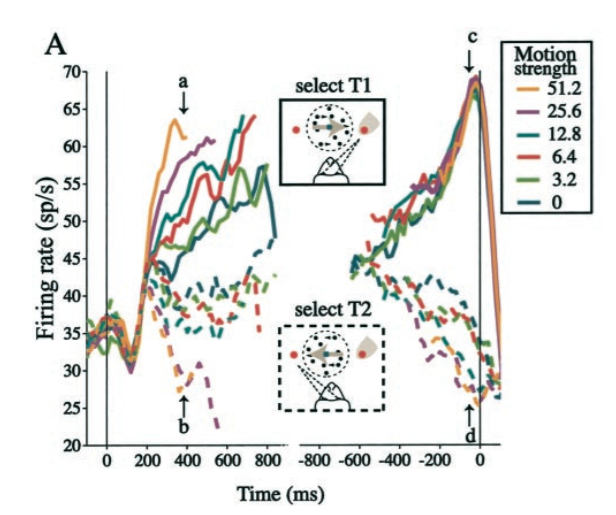

Fit behavioral data from motion task

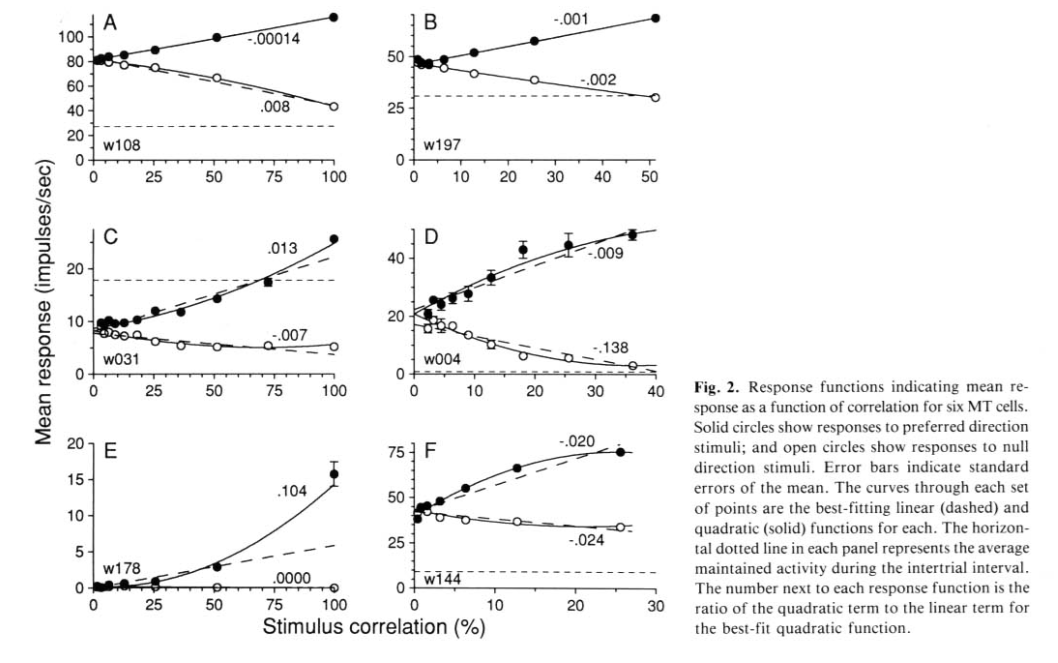

From the above, we now know that to fit behavioral data, we have two free parameters μ and A. How do Shadlen et al., fit their behavioral data? Well they know that as you increase motion coherence, that neurons in MT increase their response approximately linearly Britten KH, Shadlen MN, Newsome WT, Movshon JA (1993) Vis Neurosci 10:1157–1169 <pdf>. Here are a few examples of MT neurons responding increasing (or decreasing) as motion coherence is increased in their preferred (or anti-preferred) direction:

So, they assume that increasing coherence (C) increases the μ of the evidence process linearly. You need a scaling parameter, k, to get the parameters right. So, the equations they use, are simply the ones from above where μ has been replaced with kC (remember that C is known to them - it is the motion coherence that they used).

Pop quiz. What would you do if you were trying to model decisions and you changed image contrast, not coherence?

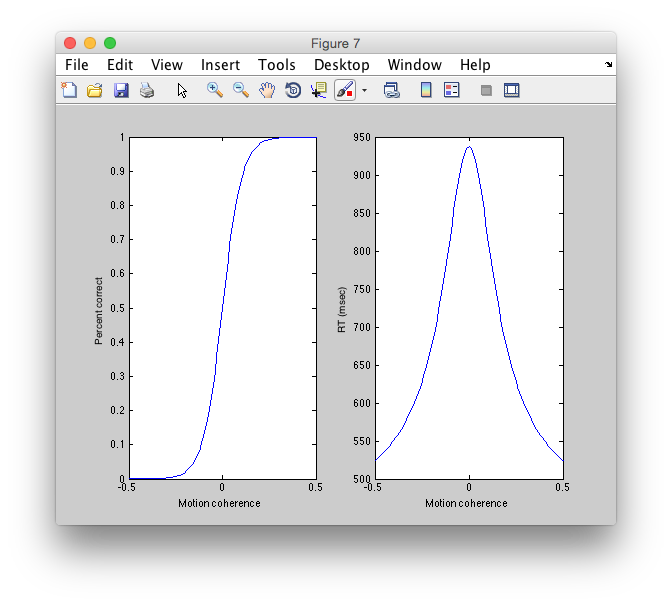

So, let's take some values from fits to monkey behavioral data from Huk & Shadlen, 2005, Figure 3. Where k = 0.426, A = 22.811 and tau (non decision time) = 417ms. Coherence of motion is varied from 0 to .50. What do we get?

It is convenient to plot on the same graph, the performance for the opposite motion direction as well (typically because we are studying a neuron and we want to know about choices made in the preferred direction vs the anti-preferred direction). When we do this, by convention, we call negative motion coherence more evidence for motion in the anti-preferred direction and we plot as a function of choices to the preferred direction. Try and plot the full curve this way.

So, that is the solution using the equations. Does it actually match our simulation? Well, try it out. Set the parameters from above, for a low coherence, say 0.05, and see if you get the expectation from the equations. BTW, what is the expectation?

So, run the simulation now with the parameters we have, and see what pCorrect we get.

What does this imply about the underlying decision process? Well, let's see what on average the process looks like for. To do this, simulate the decision process from above, but this time keep a copy of each actual decision process (just for correct trials), so that we can average and look at it afterwords. That means instead of one array d, we should make a matrix d with rows equal to trials of the decision process and columns equal to time points. Note this is a bit tricky, since each decision process can last a different amount of time. In the end, we will put the value of the bound into each decision process for the time that it terminates to the end of the simulation time (since the assumption is that the process is just absorbed into the bound). This all can be done with a few modifications of the code from above. If you know what to do, go ahead and do that.

Now, try running the same simulation, but set the coherence higher, to say 0.2, which should make the task much easier and plot that on the same graph. What does it look like?

If you do that across the coherences used in Roitman & Shadlen, 2002 pdf, would it look like what they recorded from LIP?