
Parietal and prefrontal: categorical differences?

Daniel Birman & Justin L. Gardner

Department of Psychology, Stanford University, Stanford CA

Intro

Here we run an informal experiment to test our intuitions about whether human subjects can easily learn delayed match-to-sample and category tasks implicitly. Participants learned the two tasks on Mechanical Turk. We found that humans learned the categorical task only with considerable difficulty, converging on incomplete superstitions about the categorical rule. In contrast, participants learned the match-to-sample task almost immediately and achieved near-perfect accuracy within only a few dozen trials.

You can try out the experiment for yourself online! First look at the stimulus and read the instructions below (Fig 1); then, to try the match-to-sample task, go here, and to try the match-to-category task, go here.

The data, analysis, and results listed here have not been peer reviewed and are provided to stimulate thought and discussion. See the full commentary for more details.

Methods

Human subjects

197 human subjects were recruited via Amazon's Mechanical Turk to complete either a delayed match-to-sample or a delayed match-to-category task. Participants were restricted to ages between 18 and 50. For the delayed match-to-sample task the mean age of participants was 33 ± 8.8 years, and there were 27 male and 18 female participants. For the category task the mean age was 36 ± 11.1 years, and there were 32 male and 32 female participants. Participants were paid $8/hr for their time. All subjects gave prior informed consent, and all experimental procedures were approved in advance by Stanford University’s Institutional Review Board.

89 participants (45%) dropped out; eight of these did so before starting the experiment. We received emails from nine participants who dropped out reporting technical problems (e.g. the experiment failed to launch because of an error, or they entered their screen size incorrectly so that the stimulus size calibration failed), and two participants reported running out of time (due to long breaks). This leaves 70 participants who dropped out for unknown reasons and did not contact us to report an error. A possible reason for dropout is that the experiment took 30-45 minutes, longer than typical experiments on Mechanical Turk, which last 1-10 minutes.

After participating, subjects were asked to report details of their experience running the experiment. 5% of participants reported that the screen had jitter, dropped frames, or other rendering problems. 92% of participants reported fixating the cross, although they were not explicitly instructed to do so. 29% of participants reported eye jitter due to the translation of the motion stimulus. 6% reported having done a similar experiment in the past. Workers were blocked from participating more than once under the same Mechanical Turk worker ID.

Behavioral Task

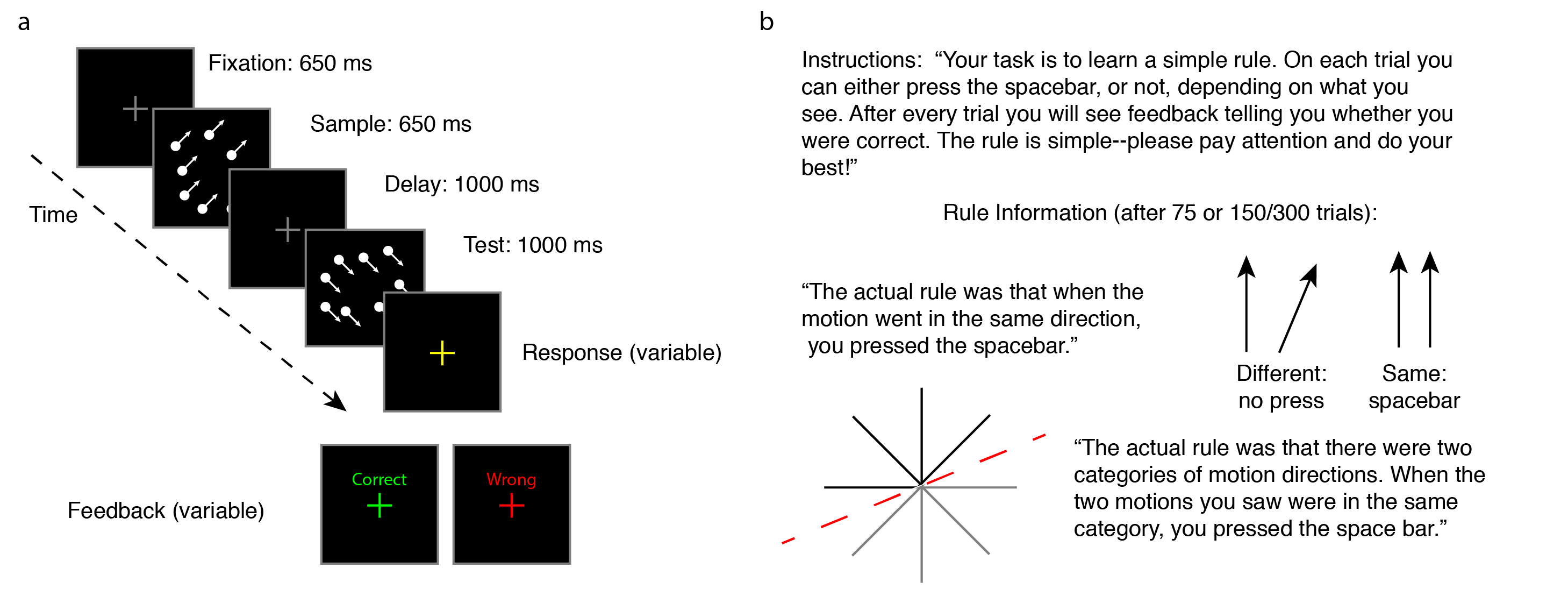

Human subjects performed versions of the direction match-to-sample and categorization tasks adapted from Sarma et al. (2016). In both tasks, subjects saw two short presentations (sample and test) of full-contrast random dots that moved with full coherence in one of eight equally spaced motion directions (Fig 1a). Participants were to press the spacebar when the test stimulus moved either in the same direction as the sample (match-to-sample task) or in a direction from the same category as the sample (category task). Dots were full-contrast white (0.1 deg diameter, 2.2 dots/deg²) presented on a black background around the fixation cross within a circular aperture of 9 deg radius. Dots moved at 12 deg/s. A fixation cross (1 deg × 1 deg, 0.1 deg line width) was presented throughout the trial.
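As a rough illustration of what these stimulus parameters imply, the sketch below computes the number of dots in the aperture and the per-frame displacement, and moves a fully coherent dot field by one frame. It assumes a 60 Hz refresh rate and replots dots that leave the aperture at a random location; neither detail is specified above, and all names are hypothetical rather than taken from the JGL code.

```python
import math
import random

APERTURE_RADIUS_DEG = 9.0
DOT_DENSITY_PER_DEG2 = 2.2
SPEED_DEG_PER_S = 12.0
FRAME_RATE_HZ = 60.0  # assumption: the real refresh rate depended on each participant's monitor


def n_dots(radius=APERTURE_RADIUS_DEG, density=DOT_DENSITY_PER_DEG2):
    """Number of dots needed to reach the target density inside a circular aperture."""
    return round(density * math.pi * radius ** 2)


def step_per_frame(speed=SPEED_DEG_PER_S, fps=FRAME_RATE_HZ):
    """Distance (deg) each dot travels between consecutive frames."""
    return speed / fps


def random_point_in_aperture(rng, radius=APERTURE_RADIUS_DEG):
    """Uniform random position inside the disc (the sqrt makes the density uniform in area)."""
    r = radius * math.sqrt(rng.random())
    angle = rng.uniform(0.0, 2.0 * math.pi)
    return r * math.cos(angle), r * math.sin(angle)


def update_dots(dots, direction_deg, rng):
    """Move every dot one frame in the same direction (full coherence).

    Angles are measured counter-clockwise from rightward, with the y axis pointing up.
    """
    step = step_per_frame()
    dx = step * math.cos(math.radians(direction_deg))
    dy = step * math.sin(math.radians(direction_deg))
    moved = []
    for x, y in dots:
        x, y = x + dx, y + dy
        if math.hypot(x, y) > APERTURE_RADIUS_DEG:
            # hypothetical policy: replot dots that leave the aperture at a random location
            x, y = random_point_in_aperture(rng)
        moved.append((x, y))
    return moved


rng = random.Random(0)
dots = [random_point_in_aperture(rng) for _ in range(n_dots())]
dots = update_dots(dots, 90.0, rng)  # one frame of upward motion
```

Under these assumptions the aperture holds about 560 dots (2.2 dots/deg² × π × 9²), and each dot moves 0.2 deg per frame.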

Subjects were not instructed on how to perform the task, but were given feedback at the end of each trial about whether they had responded correctly. The only instructions participants received were to respond with the spacebar and that a simple rule existed (Fig 1b).

We ran three groups of subjects, who performed either the delayed match-to-sample or the categorization task. To limit the length of the experiment, we trained each subject on either the delayed match-to-sample or the categorization task, but not both. In the delayed match-to-sample task, subjects ran 75 trials, were then told the rule explicitly, and performed 50 additional trials (Fig 1b). For the categorization task we ran two groups of subjects: one performed 150 categorization trials and the other (long group) performed 300. We then informed both groups of the categorization rule and had them perform another 60 trials.

The match-to-sample and categorization tasks used identical stimuli, but the pairs of directions shown on each trial differed between tasks. In the match-to-sample task the sample direction was chosen from the eight motion directions, and the test direction was either a match or a non-match. Non-match test directions differed from the sample by ±22.5, ±45, or ±67.5 degrees, in order to obtain data for individual psychometric functions. In the categorization task both the sample and test directions were drawn from the eight motion directions. The category boundary was the same for all participants: the line at an angle of 22.5 degrees above horizontal (Fig 1b). Participants saw an equal proportion of match and non-match trials in both tasks. To ensure that participants in the categorization task had sufficient information to learn the task, we optimized the presentation of error trials in the 300-trial categorization task: directions were chosen such that participants would see, and respond correctly to, all 64 (8 × 8) direction combinations before they began seeing repetitions of combinations they had already completed.
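The direction-pair rules above can be sketched as follows. The category function assigns the four directions lying between 22.5 and 202.5 degrees to one category and the remaining four to the other, so exactly 32 of the 64 sample-test pairs are category matches. The scheduler is one hypothetical way to implement the error-driven presentation described for the 300-trial group; the original experiment code may differ.

```python
import random

DIRECTIONS = [45.0 * k for k in range(8)]  # eight equally spaced motion directions (deg)
BOUNDARY_DEG = 22.5                         # category boundary: line 22.5 deg above horizontal
NONMATCH_OFFSETS = [22.5, 45.0, 67.5]       # match-to-sample non-match offsets (deg)


def category(direction_deg):
    """1 if the direction lies between 22.5 and 202.5 deg (one side of the boundary), else 0."""
    d = direction_deg % 360.0
    return 1 if BOUNDARY_DEG < d < BOUNDARY_DEG + 180.0 else 0


def is_category_match(sample_deg, test_deg):
    return category(sample_deg) == category(test_deg)


def mts_test_direction(sample_deg, rng):
    """Match-to-sample: on average half the trials repeat the sample; the rest are offset
    by +-22.5, 45 or 67.5 deg."""
    if rng.random() < 0.5:
        return sample_deg
    offset = rng.choice(NONMATCH_OFFSETS) * rng.choice([-1.0, 1.0])
    return (sample_deg + offset) % 360.0


class ErrorDrivenSchedule:
    """Hypothetical scheduler: a (sample, test) pair stays in the pool until it has been
    answered correctly, so all 64 pairs are completed before any completed pair repeats."""

    def __init__(self, rng):
        self.rng = rng
        self.pool = []

    def next_pair(self):
        if not self.pool:  # every pair has been answered correctly: start a new cycle
            self.pool = [(s, t) for s in DIRECTIONS for t in DIRECTIONS]
        return self.rng.choice(self.pool)

    def record(self, pair, correct):
        if correct:
            self.pool.remove(pair)
```

Because a pair leaves the pool only after a correct response, an incorrectly answered pair can be drawn again within the same cycle, while correctly answered pairs are withheld until all 64 have been completed.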

Figure 1: Stimulus and instructions. (a) Trial sequence and timing of events. Note that after each block, the response and feedback segments of the trial were gradually shortened: the response segment from an initial 2500 ms to 1200 ms, and the feedback segment from an initial 1500 ms to 550 ms. (b) Instructions. Participants were shown identical instructions in both the delayed match-to-sample and category tasks. After an initial learning phase of 50 (match-to-sample) or 150/300 trials (category), the actual rule was explained to them and they completed a final block of 50 or 60 trials, respectively.

Analysis

To obtain a between-subject estimate of the angle threshold at 75% performance, we modeled performance in the direction task as a function of the sample-test angle difference with a 3-parameter sigmoidal function, fit to the pooled data from all subjects. We extracted the threshold estimate from the fitted function (Fig 2b).
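A minimal sketch of this threshold analysis, assuming one common 3-parameter form: a logistic with a free lower asymptote f, center μ and slope scale σ, p(x) = f + (1 - f) / (1 + exp(-(x - μ)/σ)). The exact sigmoid used here is not given. Inverting it gives the 75% threshold x75 = μ + σ ln(q / (1 - q)) with q = (0.75 - f)/(1 - f). A coarse brute-force grid search stands in for a proper optimizer such as scipy.optimize.curve_fit.

```python
import math


def sigmoid3(x, floor, mu, sigma):
    """Assumed 3-parameter sigmoid: rises from `floor` to 1, centred on mu (deg), scale sigma (deg)."""
    return floor + (1.0 - floor) / (1.0 + math.exp(-(x - mu) / sigma))


def fit_sigmoid3(angle_diffs, proportions):
    """Least-squares fit by brute-force grid search over (floor, mu, sigma).

    A stand-in for a gradient-based optimizer; the grid limits are arbitrary choices.
    """
    best, best_sse = None, float("inf")
    for i in range(11):                  # floor: 0.00, 0.05, ..., 0.50
        floor = i * 0.05
        for mu in range(0, 91):          # mu: 0..90 deg in 1-deg steps
            for sigma in range(1, 31):   # sigma: 1..30 deg in 1-deg steps
                sse = sum((sigmoid3(x, floor, mu, sigma) - y) ** 2
                          for x, y in zip(angle_diffs, proportions))
                if sse < best_sse:
                    best, best_sse = (floor, float(mu), float(sigma)), sse
    return best


def threshold(floor, mu, sigma, level=0.75):
    """Invert the fitted sigmoid: the angle difference at which it reaches `level` (needs floor < level)."""
    q = (level - floor) / (1.0 - floor)
    return mu + sigma * math.log(q / (1.0 - q))
```

For example, data generated from f = 0.1, μ = 20 and σ = 5 give a threshold of 20 + 5 ln 2.6 ≈ 24.8 degrees.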

For the visual display of human learning in the category task (Fig 2c), we fit each subject's data with a logistic regression model.
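One way to produce per-subject learning curves like those in Fig 2c is to regress trial-by-trial correctness (0/1) on trial number. The sketch below fits that two-parameter logistic regression by Newton-Raphson in plain Python; rescaling trial number to [0, 1] is our choice for numerical stability rather than something stated above, and the function names are hypothetical.

```python
import math


def fit_learning_curve(correct, n_iter=50):
    """Logistic regression of trial outcome on trial number, fit by Newton-Raphson.

    Model: P(correct on trial i) = 1 / (1 + exp(-(b0 + b1 * t_i))), with t_i = i / (n - 1).
    `correct` holds one 0/1 outcome per trial, in the order the trials were run.
    """
    n = len(correct)
    ts = [i / (n - 1) for i in range(n)]
    b0 = b1 = 0.0
    for _ in range(n_iter):
        # Gradient (g0, g1) and information matrix [[h00, h01], [h01, h11]] of the log-likelihood.
        g0 = g1 = h00 = h01 = h11 = 0.0
        for t, y in zip(ts, correct):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * t)))
            w = p * (1.0 - p)
            g0 += y - p
            g1 += (y - p) * t
            h00 += w
            h01 += w * t
            h11 += w * t * t
        det = h00 * h11 - h01 * h01
        # Newton step: (b0, b1) += inverse(information) @ gradient, 2x2 inverse written out.
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (h00 * g1 - h01 * g0) / det
    return b0, b1


def predicted_percent_correct(b0, b1, n):
    """Fitted learning curve (percent correct) for trials 0..n-1."""
    return [100.0 / (1.0 + math.exp(-(b0 + b1 * i / (n - 1)))) for i in range(n)]
```

At the maximum-likelihood solution the fitted probabilities sum to the number of correct trials, a quick check on convergence. If a subject is correct (or wrong) on every trial the estimate does not exist, so a real analysis would need a guard or regularization.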

To compare performance across subjects between task conditions (Fig 2d), we fit a linear model and tested the size of the main-effect coefficients and their interaction terms. We coded the task variable (Direction, Category: Short, Category: Long) as two contrasts: Task1 tested for a difference between the direction task and both category tasks, and Task2 tested for a difference between the short and long variants of the category task.
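The numeric contrast weights are not reported, so the coding below is an assumption chosen so that each coefficient reads as a simple difference: Task1 compares the direction task with the mean of the two category tasks, Task2 compares the long with the short category task, and a Phase code (an assumed name for the learning vs. known comparison in Fig 2d) compares the known with the learning phase. Interactions are products of the codes. The normal equations are solved with a small Gauss-Jordan routine to keep the sketch dependency-free; in practice a statistics package would also supply the t statistics.

```python
# Hypothetical contrast codes; the write-up names the contrasts but not their numeric values.
TASK1 = {"direction": 2 / 3, "category_short": -1 / 3, "category_long": -1 / 3}
TASK2 = {"direction": 0.0, "category_short": -0.5, "category_long": 0.5}
PHASE = {"learning": -0.5, "known": 0.5}


def design_row(task, phase):
    """Columns: intercept, Task1, Task2, Phase, Task1*Phase, Task2*Phase."""
    t1, t2, ph = TASK1[task], TASK2[task], PHASE[phase]
    return [1.0, t1, t2, ph, t1 * ph, t2 * ph]


def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= f * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_ols(observations):
    """observations: iterable of (task, phase, percent_correct). Returns the 6 OLS coefficients."""
    rows = [(design_row(task, phase), y) for task, phase, y in observations]
    k = len(rows[0][0])
    xtx = [[sum(x[i] * x[j] for x, _ in rows) for j in range(k)] for i in range(k)]
    xty = [sum(x[i] * y for x, y in rows) for i in range(k)]
    return solve(xtx, xty)
```

With balanced, centered codes like these, the intercept is the unweighted grand mean of the six cell means, and the Task1 × Phase coefficient is the phase effect in the direction task minus the average phase effect in the two category tasks, so its sign depends on which task is coded positive.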

Stimulus Display

Participants self-reported their screen size, and their display was calibrated under the assumption of a 61 cm viewing distance. Participation was limited to laptop and desktop computers running the Firefox and Chrome web browsers; tablets and smartphones were excluded. Stimuli were presented with custom JavaScript code (JGL) transferred to participants' computers by a server running psiTurk.
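A sketch of how a self-reported screen size and the assumed 61 cm viewing distance could be turned into a degrees-to-pixels conversion. It assumes the participant reports the screen diagonal in inches, that the resolution in pixels is known from the browser, and that pixels are square; the function name is hypothetical and JGL's own calibration may differ.

```python
import math

VIEWING_DISTANCE_CM = 61.0  # viewing distance assumed for calibration


def pixels_per_degree(diag_inches, width_px, height_px, distance_cm=VIEWING_DISTANCE_CM):
    """Convert a self-reported diagonal screen size into pixels per degree of visual angle."""
    diag_px = math.hypot(width_px, height_px)
    px_per_cm = diag_px / (diag_inches * 2.54)
    # Physical size of 1 deg of visual angle centred on the line of sight.
    cm_per_deg = 2.0 * distance_cm * math.tan(math.radians(0.5))
    return px_per_cm * cm_per_deg
```

For a 24-inch 1920 × 1080 display this gives roughly 38.5 pixels per degree, so the 9 deg aperture radius would span about 346 pixels.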

Results

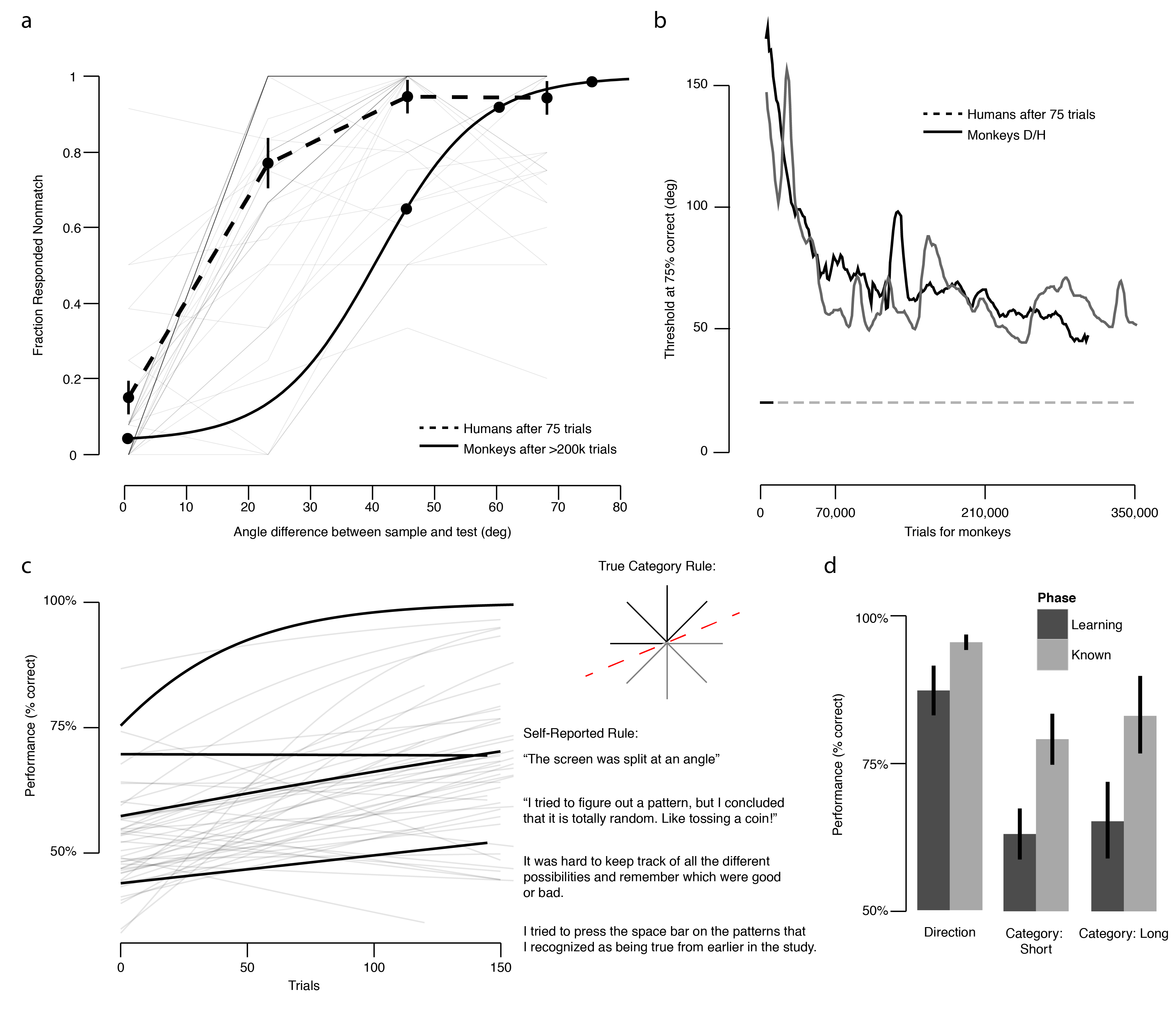

We found that human subjects easily learned the match-to-sample task, unlike the monkeys in Sarma et al., but that they were qualitatively more similar to monkeys in the categorization task. To assess whether participants learned the correct rule, we restricted our data to participants who self-reported partial or complete understanding and counted the number who verbalized the rule as some form of “if they were the same [direction] I pressed the space bar” (Subj 41). In the match-to-sample task, 93% of participants self-reported understanding and were able to verbalize the correct rule after 75 trials, before being informed of the rule. Human threshold performance (the difference between sample and test direction needed for 75% correct performance) was 17.39 degrees, computed from a sigmoid function fit across subjects (R² = 0.56). This was considerably better than the monkeys (Fig 2a), whose performance reached an asymptote near 50 degrees. In summary, within 75 trials human performance was better than monkey performance after hundreds of thousands of trials (Fig 2b). This finding confirms the intuition that monkeys and humans perform the match-to-sample task in profoundly different ways.

In both the short and long groups of the categorization task, we found that fewer than 10% of participants were able to verbalize the rule after the learning phase. To assess subject understanding, we re-coded participant responses into three groups: learned, e.g. “Screen was split on an angle” (Subj 110); partially learned, e.g. “If the direction was moving in a similar direction. For instance down and right and down and left = space bar; left and right = no space bar; left and left and down = space bar; down and up = no space bar.” (Subj 106); or not learned, e.g. “I was really unable to find a rule other than that if they both went in the same direction, I should always hit space”. Of the 47 participants in the short group (150 learning trials), 9% understood the rule, 28% were on the right track, and 64% had no idea or knew only that identical directions counted as matches. Of the 16 participants in the long group (300 learning trials), 6% understood the rule, 50% were on the right track, and 44% had no idea or knew only that identical directions counted as matches. Participants' performance matched their self-reported rules (Fig 2c), and participants who did well were consistently able to verbalize a rule similar to the true rule. In both tasks participants were more successful after being informed of the rule (Fig 2d), and overall the category task was considerably harder than the direction task. In summary, human performance on the categorization task qualitatively resembles monkey performance: when given only implicit feedback, humans do not learn categorical rule boundaries with ease.

Figure 2: Results. (a) Human and monkey performance in the delayed match-to-sample task, plotted as the proportion of non-match responses as a function of the sample-test angle difference. Monkey data are reproduced from Sarma et al. (2016), Fig 2; their SEM bars are too small to be visible. Individual human curves are shown as grey lines in the background, and a between-subject average (±SEM) is shown as a dashed line. 13 subjects showed perfect performance; their overlapping lines appear darker in the figure. (b) Human and monkey learning. Monkey data are reproduced from Sarma et al. (2016), Suppl Fig 2, showing asymptotic performance at a threshold of approx. 50 degrees. Monkey total trials are estimated by multiplying an average of 1400 trials per session by the number of sessions. Human data show the threshold performance after 75 trials, 17.39 degrees. (c) Human learning in the delayed match-to-category task, shown as logistic regression fits estimating performance (percent correct) across trials. Four subjects are highlighted and their self-reported rules are displayed. Overall, fewer than 10% of subjects learned the rule and were able to verbalize their understanding. (d) Informed learning. Average performance across subjects (mean ± 95% CI) is shown for each condition in the last third of trials of the learning phase, compared with performance after being informed about the rule (known phase). We fit a linear model to the data to test the prediction that performance improved after the rule was introduced. The grand average performance was 72% across all of the data. Overall, the direction task was easier for subjects, with a 7.7% performance difference relative to the category task (t(205) = 8.526, p < 0.001). Introducing rule information improved performance in both tasks (t(205) = 7.035, p < 0.001), with a slightly larger effect in the category task (15.6%) than in the direction task (12.7%) (β(Task1 × Phase) = 3.0%, t(205) = 2.309, p = 0.022). We found no difference between the longer and shorter category tasks, although there was a trend toward more learning with more training trials. We did not exclude participants who were unable to perform the task, to avoid biasing the results; there were significantly more of these participants in the categorization task than in the direction task (χ²(2) = 32.576, p < 0.001).

Public Data and Code

The entire experimental code, raw data, and analysis scripts are hosted in an online repository.

References

Sarma, A., Masse, N. Y., Wang, X. J., & Freedman, D. J. (2016). Task-specific versus generalized mnemonic representations in parietal and prefrontal cortices. Nature Neuroscience, 19(1), 143-149.